Informed Practice and Superforecasting: Taking Your Forecasts to the Next Level

“Not all practice improves skill. It needs to be informed practice.”

– Phil Tetlock & Dan Gardner in Superforecasting

In any area of decision-making where uncertainty looms large, accuracy is the gold standard. However, many decision makers find themselves in a frustrating cycle: sometimes they make the right call, and other times they miss the mark entirely. Inconsistency can be costly. So, what separates those who occasionally succeed from those who reliably deliver top-notch forecasts? The answer lies in informed practice, one of the concepts at the heart of Superforecasting.

What Is Informed Practice?

Informed practice is not just repetition. It’s a deliberate and thoughtful process of learning from each forecast, refining techniques, and continuously updating one’s beliefs based on new information. It’s about approaching forecasting with a Superforecaster’s mindset—an outlook geared toward improvement, with a consistent effort to mitigate one’s cognitive biases.

What Can Forecasters Learn from Superforecasters?

Superforecasters, known for their uncanny forecasting accuracy, exemplify informed practice. They don’t pull numbers out of a hat or look into a crystal ball for answers. For every question they face, they engage in a rigorous process of analysis, reflection, and adjustment. Here’s how informed practice gives them the edge:

1. Learning from Feedback: Superforecasters thrive on feedback. They meticulously track their forecasts, comparing them against the outcomes to identify where they went right and where they missed the mark. This feedback loop is crucial. It allows them to recalibrate their approach and avoid making the same mistakes twice. Over time, this leads to more refined and accurate forecasts.

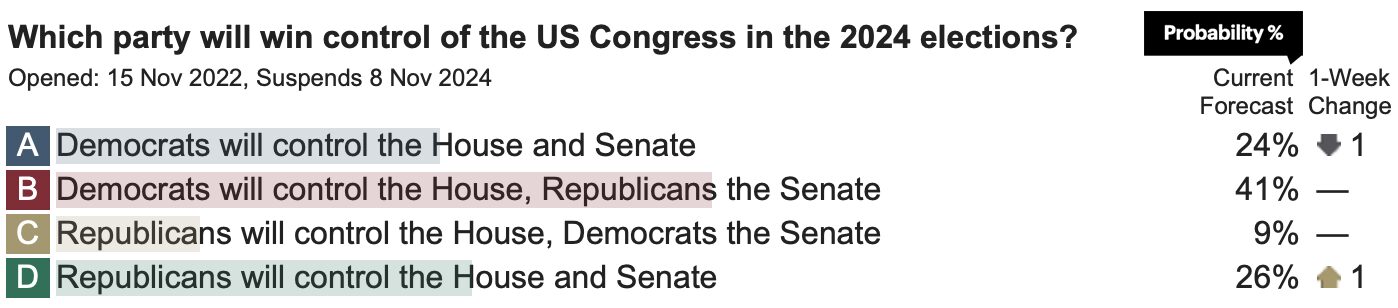

2. Understanding Probability: A key aspect of informed practice is the understanding and effective use of probability. Superforecasters don’t think in black-and-white, yes-or-no terms. They consider a range of possible outcomes and assign probabilities to each. They also update these probabilities as new information becomes available, a process known as Bayesian reasoning. This probabilistic thinking helps them navigate uncertainty with greater precision.

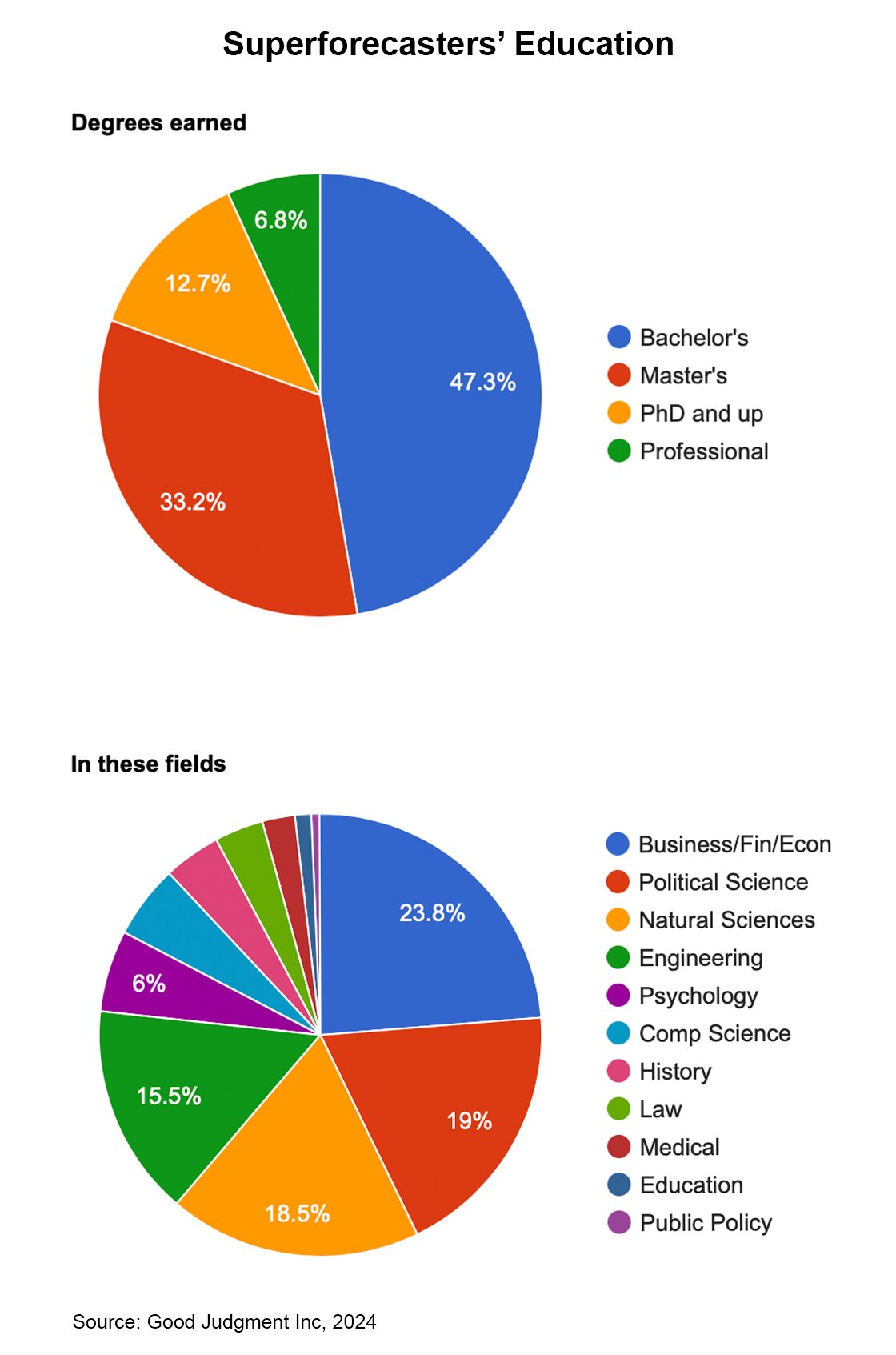

3. Continuous Learning: The world is constantly changing, and so too are the variables that influence forecasts. Superforecasters are voracious learners, continuously updating their knowledge base. They stay informed about the latest developments in multiple areas, thus grounding their forecasts in the most current data and insights.

4. Mitigating Cognitive Biases: Cognitive biases can cloud judgment and lead to poor forecasts. Superforecasters are keenly aware of these biases and actively work to mitigate their impact. Through informed practice, they develop strategies to counteract such biases as overconfidence, anchoring, confirmation bias, and more, to make well-calibrated forecasts.
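To make the probability updating described in point 2 concrete, here is a minimal sketch of a single Bayesian update in Python. The function name `bayes_update` and all the numbers are illustrative assumptions, not something Superforecasters are known to use in this exact form; real updating usually involves judgment about how diagnostic a piece of evidence is.

```python
def bayes_update(prior: float, p_evidence_if_true: float, p_evidence_if_false: float) -> float:
    """Return P(event | evidence) using Bayes' rule.

    prior               -- probability of the event before seeing the evidence
    p_evidence_if_true  -- chance of seeing this evidence if the event will happen
    p_evidence_if_false -- chance of seeing this evidence if the event will not happen
    """
    numerator = prior * p_evidence_if_true
    denominator = numerator + (1.0 - prior) * p_evidence_if_false
    if denominator == 0:
        raise ValueError("The evidence is impossible under both hypotheses.")
    return numerator / denominator


# Hypothetical example: a 30% forecast, then news that is twice as likely
# to appear if the event is going to happen as if it is not.
posterior = bayes_update(prior=0.30, p_evidence_if_true=0.80, p_evidence_if_false=0.40)
print(round(posterior, 3))  # 0.462
```

In this made-up case the forecast moves from 30% to about 46%: a meaningful shift, but not a leap to certainty. That kind of incremental adjustment is what probabilistic updating looks like in practice.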

What Is the Role of Collaboration in This?

Informed practice is not a solitary endeavor. Collaboration with other forecasters is a powerful tool for improving accuracy and staying on track. By engaging in discussions, comparing notes, and challenging each other’s assumptions, forecasters can gain new perspectives and insights. Good Judgment’s Superforecasters work in teams, leveraging the collective intelligence of the group to arrive at superior forecasts.

What Practical Steps Can I Take?

1. Keep Track: Keep a record of your forecasts and compare them with the outcomes. Analyze your hits and misses to identify patterns and areas for improvement.

2. Seek Feedback: Seek out feedback from peers or through forecasting platforms such as GJ Open, which provides performance metrics. Use this feedback to refine your approach.

3. Diversify Your Sources of Information: Regularly update your knowledge on the topics you forecast and seek out diverse sources. This includes staying current with news, research, and expert opinions, including those you disagree with.

4. Practice Probabilistic Thinking: Assign probabilities to your forecasts and be willing to adjust them as new information emerges. This helps you avoid the trap of binary thinking.

5. Challenge Your Assumptions: Regularly question your assumptions and be open to changing your mind. This flexibility is crucial in a rapidly changing world.

6. Get a Head Start with GJ Superforecasting Workshops: Consider enrolling in a Superforecasting workshop. Good Judgment’s workshops, led by Superforecasters and GJ data scientists, leverage our years of experience in the field of elite forecasting as well as new developments in the art and science of decision-making to provide you with structured guidance on improving your forecasting skills. Our practical exercises will boost your informed practice, offering you lifelong benefits.
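As one way to put step 1 into practice, the sketch below keeps a small forecast log and scores it with the Brier score, a common accuracy measure for probability forecasts. It assumes yes/no questions and uses the single-probability form of the score (0 is perfect, 1 is the worst possible); platforms may compute their metrics differently. The `Forecast` class, the question labels, and the numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Forecast:
    question: str
    probability: float  # forecast probability that the event happens
    outcome: int        # 1 if the event happened, 0 if it did not


def brier_score(forecasts: list[Forecast]) -> float:
    """Mean squared difference between forecast probabilities and outcomes."""
    if not forecasts:
        raise ValueError("Need at least one resolved forecast to score.")
    return sum((f.probability - f.outcome) ** 2 for f in forecasts) / len(forecasts)


def biggest_misses(forecasts: list[Forecast], n: int = 1) -> list[Forecast]:
    """Return the n forecasts with the largest squared error, worst first."""
    return sorted(forecasts, key=lambda f: (f.probability - f.outcome) ** 2, reverse=True)[:n]


# Hypothetical log of three resolved forecasts.
log = [
    Forecast("Hypothetical question A", 0.80, 1),
    Forecast("Hypothetical question B", 0.30, 0),
    Forecast("Hypothetical question C", 0.60, 0),
]

print(round(brier_score(log), 3))       # 0.163
print(biggest_misses(log)[0].question)  # Hypothetical question C
```

Reviewing the biggest misses first is a simple way to look for patterns, such as a tendency toward overconfidence on a particular type of question.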

Informed practice is the cornerstone of good forecasting and one of the secrets behind the success of Superforecasters. By diligently applying the above principles, you can enhance your forecasting skills and make better-informed decisions. See the workshops we offer to help you and your team take your forecasting success to the next level.